Developers Who Stopped Growing in the Age of AI Coding

The brain doesn't remember what comes easy

The more you use AI, the harder it becomes to use AI well. That may sound counterintuitive, but I believe it’s true.

Everyone seems to be caught up in AI FOMO these days, working hard to get better at using AI. But before we get there, we need to ask what it actually means to “use AI well.”

Methodologies like agent orchestration or harness engineering are really just the tide — as tools improve, everyone catches up eventually. The real differentiator lies elsewhere. I think it’s the ability to judge whether AI’s output is good or bad, correct it when it goes in the wrong direction, and draw out better results. And ironically, that ability weakens the more we lean on AI.

There’s a saying: “Working for 10 years doesn’t give you 10 years of experience if you’re just repeating the same 1 year of experience 10 times.” It describes someone whose years stack up while their skills stay flat — where a 10-year developer and a 3-year developer are barely distinguishable in judgment.

This phenomenon existed long before AI arrived. But now that AI can take over the pain of writing code, falling into that loop of repeating the same one year of experience for a decade has become incomparably easier than before.

This AI dependency is a new version of an old problem: the developer who stops growing.

In a previous post, “When AI Writes the Code, a Developer’s True Capability Is Revealed,” I argued that the core competencies required of developers won’t change much in the AI era.

In this post, I want to ask a more fundamental question: why does depending on AI actually make it harder to use AI well? And what does neuroscience have to say about this?

The Paradox: You Need to Know Code to Use AI Well

What does it look like in practice to judge the quality of AI’s output? Plenty of people can already tell AI “build me this.”

But far fewer can look at what AI produced and say specifically: “this structure is brittle to change,” “this interface has two responsibilities,” “this level of abstraction doesn’t fit the current complexity of the project.” And the ability to make those specific critiques and corrections is exactly what separates using AI well from being dragged along by it.

That ability comes from a sense of what “good code” looks like. The feeling a seasoned developer gets when looking at code — that vague “something feels off” — is formed through countless failures, debugging sessions, and refactors. It isn’t the kind of thing you can acquire purely through theory. It’s closer to intuition. This intuition only develops when a developer has personally suffered through bad structure, personally built good abstractions, and developed a feel for “this level is about right.”

This isn’t just about “humans still need to read code,” as I mentioned in the previous post. Even from the pure goal of using AI effectively, deep understanding of code is a non-negotiable prerequisite. Learning to use AI and learning code patterns aren’t competing alternatives — the latter is the foundation of the former. No matter how easy AI’s interface becomes, AI cannot grow your eye for judging the quality of its output.

To put it in one sentence: the developer who can best leverage AI is the developer who can evaluate code without AI. And if you only rely on AI, that evaluative judgment never develops.

Neuroscience shows this isn’t just intuition.

The Brain Doesn’t Remember What Comes Easy

To say you’ve truly “learned” something, it must be stored in long-term memory. If you understand it momentarily and forget it days later, that’s closer to consumption than learning.

Developers have probably had this experience: you read about a design pattern in a blog post, feel like you understood it completely — then a week later, when you run into a similar problem, you can’t remember a thing. Meanwhile, a pattern you struggled through and implemented yourself in a project stays vividly with you months later. This difference isn’t just individual variation; it stems from how the brain stores memories.

The process of forming long-term memory is a bit at odds with our intuition. Things we processed with difficulty and struggle tend to stick better than things we understood easily and smoothly.

UCLA cognitive psychologist Robert Bjork explained this with the concept of “desirable difficulties.” Bjork argued that when learning involves an appropriate level of challenge and resistance, short-term performance slows down — but long-term retention and transfer actually improve.

One of the key mechanisms Bjork identified is retrieval practice. Practicing actively recalling something is far more effective for long-term memory formation than re-reading the same material.

Roediger and Karpicke’s 2006 study shows this quantitatively.

The experiment split students into two groups. One group repeatedly read the same material; the other read it and then practiced recalling the content themselves. When tested five minutes after the study session, the re-reading group scored higher. But when retested a week later, the retrieval practice group’s retention was about 50% higher.

What does this tell us? Passively receiving information and actively retrieving it may look similar in the short term, but they produce entirely different effects over time.

This difference is also confirmed at the level of brain activation. The group that actively recalled what they learned showed strengthened connectivity between the hippocampus and prefrontal cortex, and increased activation in the sensorimotor network and insular cortex.

The hippocampus is the key region for forming new memories, and the prefrontal cortex handles higher-order thinking and decision-making. When both regions activate simultaneously and communicate closely, it means the brain isn’t just storing information — it’s weaving it together with context.

The group that passively listened to lectures, by contrast, showed primarily activation of the connection between the hippocampus and the fusiform gyrus. The fusiform gyrus handles visual processing, particularly pattern recognition. In other words, a brain in passive learning mode is essentially watching information rather than processing it.

Put simply: the harder your brain works to take in and process something, the more durably it gets stored. Information received effortlessly disappears just as effortlessly.

One caveat: this doesn’t mean “harder is always better.” What Bjork emphasizes is desirable difficulty — challenges that are within reach of your current abilities. Too easy and there’s not enough load for it to stick; too hard and you’re just left with frustration. The most effective learning comes from solving appropriately difficult problems under your own power. Remember this point — we’ll come back to it when we talk about how to use AI.

The Generation Effect and the Illusion of Fluency

The “generation effect” from Slamecka and Graf’s classic 1978 study deserves mention here too. Participants were split into two groups. One group was shown complete word pairs — for example, “hot–cold” — as-is. The other group was given a prompt like “hot–co___” and had to complete the word themselves. The difference was just filling in a few letters, yet the results were quite different: the group that filled in those letters themselves showed meaningfully higher retention.

The neuroscience behind this difference makes it more interesting. When you generate information yourself, the brain’s semantic processing regions, retrieval pathways, and executive control areas all activate simultaneously. The more brain regions involved at once, the richer the memory encoding, and the more pathways available later for retrieval. Passively reading completed information, by contrast, mainly activates recognition networks — so the encoding is comparatively shallow.

There’s a trap to watch out for here: what cognitive psychology calls the “illusion of fluency.” The feeling that you can process information easily leads to the mistaken belief that you’ll remember it well.

Reading a well-written, clear piece feels like understanding, but actual retention isn’t as high as that feeling suggests. The re-reading group in Roediger and Karpicke’s study scoring better right after studying is the same phenomenon: it feels like mastery in the moment, but it doesn’t last.

How the Ability to Judge Code Gets Built

The desirable difficulties and generation effect described above are general learning principles. To understand how they specifically operate in coding, we first need to look at what form coding judgment takes in the brain.

A significant part of what we call coding skill is procedural memory — memory of “how to do something.” Like riding a bike, playing an instrument, or typing: once embodied, it executes automatically without conscious recall.

In coding, procedural memory shows up as: the solution pattern that naturally surfaces when you see a certain type of problem; the gut feeling that “something’s off here” when reading code; the instinctive sense of where to draw the boundary when structuring a design; the eye that spots in an instant where refactoring is needed.

These are a completely different type of memory from the explicit knowledge you get from reading a textbook. Knowing how to list the SOLID principles and being able to detect a Single Responsibility violation the moment you see code — these are different types of knowledge handled by different brain regions.
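To make the gap between listing a principle and spotting its violation concrete, here is a minimal sketch. The names `InvoiceReport`, `sumAmounts`, and `formatTotal` are hypothetical, invented for illustration only. Nothing about the class is syntactically wrong, which is why explicit knowledge alone rarely flags it: the problem is that it has two separate reasons to change.

```typescript
// Hypothetical example: none of these names come from a real codebase.

// One class, two reasons to change: the calculation rule and the output format.
class InvoiceReport {
  total(amounts: number[]): number {
    return amounts.reduce((sum, amount) => sum + amount, 0);
  }

  render(amounts: number[]): string {
    return `Total: ${this.total(amounts).toFixed(2)}`;
  }
}

// The same behavior with each responsibility in its own function.
function sumAmounts(amounts: number[]): number {
  return amounts.reduce((sum, amount) => sum + amount, 0);
}

function formatTotal(total: number): string {
  return `Total: ${total.toFixed(2)}`;
}

const amounts = [1, 2.5];
console.log(new InvoiceReport().render(amounts)); // prints "Total: 3.50"
console.log(formatTotal(sumAmounts(amounts))); // prints "Total: 3.50"
```

Both versions print the same thing. Whether the split is worth it depends on the project, and that judgment call is exactly the kind of thing procedural memory handles.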

Three Stages of Procedural Memory Formation

Anderson’s Adaptive Control of Thought (ACT) model, which builds on Fitts and Posner’s stages of skill acquisition, describes procedural memory formation in three stages.

The first is the cognitive stage. Everything is executed consciously, step by step. Think back to when you first learned to code — how you had to think through each part of a for loop: initial value, condition, increment. At this stage, a significant portion of working memory is consumed by this process.

The second is the associative stage. Individual procedures start to integrate. You can execute the for loop, array access, and conditional branching as a single flow. Mistakes decrease and recognition speeds up.

The third is the autonomous stage. Many things execute automatically, consuming almost no working memory. Just as a seasoned developer writing filtering logic doesn’t consciously think about for loop syntax, the pattern itself is auto-recognized as a single unit. A developer who reaches this stage doesn’t spend working memory on syntax or basic patterns — freeing that capacity for design judgment and structural thinking.

The critical point is that these stages only progress through repeated practice. Moving from the cognitive to the associative stage requires personally putting load on the brain by solving the same types of problems repeatedly. Moving from the associative to the autonomous stage requires even more repetition.

The brain’s basal ganglia and cerebellum play key roles in this process. The basal ganglia handles habit formation and procedural learning; the cerebellum aids in the refinement and automation of action.

Through repeated practice, the synaptic connections in these regions are continuously strengthened — a process called long-term potentiation. As these connections strengthen, execution of a given procedure becomes faster and more accurate, eventually happening automatically without conscious effort.

When this process advances far enough, neural efficiency emerges. An expert’s brain activates fewer regions to perform the same task than a novice’s — one of the most consistently confirmed findings in neuroscience research. Doing less while producing better results: that’s roughly the neurological reality behind the abstract concept we call intuition.

So the ability to make quick, sound judgments without conscious reasoning is the product of enormous repetition and struggle — and there are no shortcuts in this process.

This is similar to physical training. To build strength, an athlete has to load the muscle. Lift something appropriately heavy — not too light, not too heavy — and the muscle undergoes micro-damage and grows, until heavier weights become possible. The brain works the same way. Growth happens when you wrestle directly with the edges of your current ability.

Experts Can Pack More into a Single Chunk

Chunking is another essential concept when explaining expertise in neuroscience.

Human working memory is limited to about 3–4 chunks — and that limit is the same for novices and experts. The number of working memory slots can’t be expanded through training.

So where does the difference between an expert and a novice come from? The answer is that the amount of information that can fit in a single chunk differs.

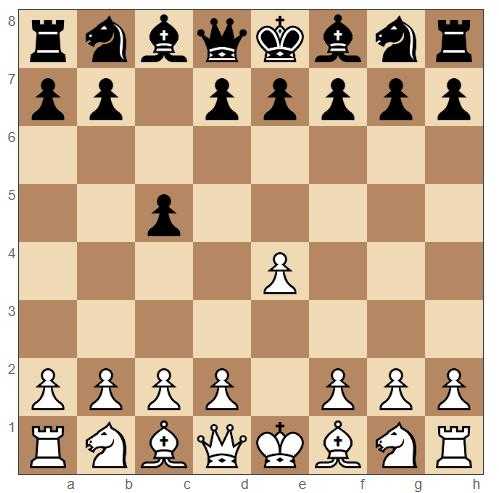

Chase and Simon’s study of chess experts demonstrates this. Researchers showed master and novice chess players a plausible mid-game board position for 5 seconds, then had them recreate it from memory. Masters reconstructed far more piece positions accurately. That much could be explained by “better memory.”

But the decisive contrast experiment came when pieces were placed randomly: the difference in reconstruction ability between masters and novices nearly disappeared. What masters had memorized wasn’t individual piece positions — it was meaningful patterns. Recognizing something like “a typical Sicilian Defense middle-game setup” as a single chunk allowed the same 3–4 working memory slots to hold vastly more information.

The Sicilian Defense is a famous counter-opening responding to White's aggressive opening

The same mechanism operates in coding. A skilled developer doesn’t process a for loop, array access, and conditional branch as separate pieces of information. They compress it into a single chunk: “filtering pattern.” They see try-catch, error type branching, and logging as a single chunk: “error handling pattern.”
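The two chunks named above might look like the following sketch. The `Order` type and the function names are hypothetical, chosen only for illustration. Each function can be read piece by piece, or recognized at a glance as a single unit.

```typescript
// Hypothetical types and names, used only to illustrate the two chunks.
interface Order {
  id: number;
  paid: boolean;
}

// Read piece by piece: a loop, an index access, a condition, a push.
// Read as one chunk: "filtering pattern".
function unpaidWithLoop(orders: Order[]): Order[] {
  const result: Order[] = [];
  for (let i = 0; i < orders.length; i++) {
    if (!orders[i].paid) {
      result.push(orders[i]);
    }
  }
  return result;
}

// The same chunk, written the way the chunk is named.
function unpaidWithFilter(orders: Order[]): Order[] {
  return orders.filter((order) => !order.paid);
}

// Read piece by piece: try/catch, an error type check, a log call, a rethrow.
// Read as one chunk: "error handling pattern".
function parsePort(raw: string, log: (message: string) => void): number | null {
  try {
    const port = parseInt(raw, 10);
    if (isNaN(port)) {
      throw new RangeError(`not a number: ${raw}`);
    }
    return port;
  } catch (error) {
    if (error instanceof RangeError) {
      log(`invalid port: ${error.message}`);
      return null;
    }
    throw error; // unknown error types are not swallowed
  }
}
```

A reader who has these chunks does not trace `i` or the `instanceof` check. They register "filter unpaid" and "handle the bad-input case" and move on to the structural questions.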

So when looking at the same code, there’s more working memory left over — and that surplus can go toward higher-level judgments: “Is this function’s scope of responsibility too wide?” “Is this dependency direction right?” These structural questions can receive cognitive resources.

How are these chunks formed? By writing code yourself, debugging, refactoring — repeatedly encountering the same patterns until the brain starts grouping them into a single unit. Recent research has confirmed that the prefrontal cortex and basal ganglia circuitry work together in this process.

Knowing a pattern and having a pattern embodied are different things. The former is explicit memory; the latter is procedural memory. You need the latter to quickly catch structural problems in a code review.

The difference between the two shows up starkly in real work. Someone who can answer “why does useEffect need a cleanup function?” with textbook perfection might still miss the problem when they see a component like this in a code review:

    function useNotificationCount({ userId }: { userId: string }) {
      const [count, setCount] = useState(0);

      useEffect(() => {
        const interval = setInterval(async () => {
          const notifications = await fetchNotifications(userId);
          setCount(notifications.unread);
        }, 5000);
      }, []);

      return count;
    }

There are two problems here. Without clearInterval, the interval keeps running after the component unmounts. And with userId missing from the dependency array, the component keeps polling the previous user’s notifications even after userId changes.

They know as explicit knowledge that “useEffect should return a cleanup function and the dependency array must be filled correctly” — but the procedural memory that makes you reflexively sense “interval isn’t being cleaned up here” and “userId isn’t in deps” when you look at the code hasn’t formed. That intuition only develops in someone who has personally encountered a stale closure bug, debugged an interval still alive after unmount, and traced the cause.
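For reference, one way the two problems could be addressed is sketched below. To keep the sketch runnable without React, the effect body is pulled into a hypothetical helper, `startNotificationPolling`, which takes `fetchNotifications` and the state setter as parameters. That extraction, the `Notifications` type, and the `cancelled` flag are assumptions of this sketch, not part of the original hook.

```typescript
// Shape assumed from the original snippet: the response exposes an `unread` count.
type Notifications = { unread: number };

// Hypothetical helper: the body of the effect, extracted so it can run outside React.
// It returns the cleanup function that useEffect would call on unmount
// or just before re-running for a new userId.
function startNotificationPolling(
  userId: string,
  fetchNotifications: (userId: string) => Promise<Notifications>,
  onCount: (count: number) => void,
  intervalMs = 5000,
): () => void {
  let cancelled = false;

  const interval = setInterval(async () => {
    const notifications = await fetchNotifications(userId);
    // Ignore responses that arrive after cleanup,
    // for example a slow request made for the previous user.
    if (!cancelled) {
      onCount(notifications.unread);
    }
  }, intervalMs);

  return () => {
    cancelled = true;
    clearInterval(interval); // fixes the interval that outlived the component
  };
}

// Inside the hook, the effect would then read:
//
//   useEffect(() => {
//     return startNotificationPolling(userId, fetchNotifications, setCount);
//   }, [userId]); // userId in deps: polling restarts for the new user
```

Returning the cleanup stops the interval on unmount, and listing `userId` in the dependency array tears down the old poller and starts a new one when the user changes. Handling of a failed fetch is left out here, as it is in the original snippet.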

To be clear, most studies cited here were conducted in general learning contexts, not coding specifically. There are limits to directly applying these findings to coding as a specialized cognitive activity. But desirable difficulties, the generation effect, and the principles of procedural memory formation are closer to general features of human cognition than domain-specific rules — and there’s currently no basis to treat coding as an exception.

AI Interferes with This Process

When you hand implementation to AI, you skip the process of personally thinking through and writing code. What variable to name it, what order to unpack this logic, how to define this function’s interface, where to handle errors. These small but recurring judgment calls are actually the core process of internalizing code patterns — and the moment AI takes over that process, the cognitive load on your brain drops sharply.

AI Takes Over the Essential Load Too

Reduced cognitive load isn’t inherently bad. According to cognitive load theory, what matters in learning is the type of load. Sweller divided cognitive load into three kinds: intrinsic load from the complexity of the task itself; extraneous load from poor learning design; and germane load, which directly supports schema building.

If what AI reduces is extraneous load — writing boilerplate, correcting syntax errors — it can actually help learning. But if AI also reduces germane load, the opportunity for the brain to build schemas disappears entirely.

The problem is that in practice, it’s hard to cleanly separate these two types of load. When you tell AI “implement this function,” AI doesn’t just write the boilerplate — it makes the design decisions for the core logic too: what data structure to use, what order to process things in, how to handle error cases. These judgments are exactly what constitutes germane load. Delegate all of this to AI and almost nothing is left for the brain.

From the procedural memory perspective: when AI handles implementation, less time is spent struggling through code directly in the cognitive stage — so the transition to the associative stage is delayed, and reaching the autonomous stage becomes harder.

The same applies to chunking. Without personally constructing and combining patterns, you can recognize individual patterns in the completed code you read, but you lack the experience of compressing them into your own chunks. It’s like reading chess theory books without ever playing a real game.

For junior developers in particular, this can be severe. When many patterns are still in the cognitive stage and AI lets you skip that stage entirely, you may be turning out results quickly on the surface while nothing in the way of procedural memory is forming inside.

AI writing code for you is close to the generation effect experiment’s condition of reading a completed word pair. The code is right in front of you, so it feels like you understood it. You can nod along, following the logic: “oh, so that’s how you do it.”

But because you didn’t generate it yourself, it doesn’t get deeply imprinted in memory. Next time you encounter a similar problem, you’re likely to hit “I know I’ve seen this somewhere but can’t remember” — the illusion of fluency at work again.

Of course, reading AI-generated code isn’t entirely passive. Parsing the intent and tracing the execution flow does require some cognitive effort.

But research has confirmed that the level of brain activation when reading code decreases as developers become more skilled — the phenomenon called neural efficiency, which means experts process code faster with fewer cognitive resources. Processing efficiently also means that much less cognitive load.

In other words, a skilled developer reading AI output doesn’t put nearly as much load on the brain as you might think.

And for a novice developer: even if reading code does generate load, that load is spent on understanding the code, which is qualitatively different from the load generated in the process of producing it. Reading alone may simply not generate enough load to embody a new pattern.

Some readers might wonder whether deeply analyzing and reviewing AI-generated code is sufficient learning on its own. There’s something to that.

But there’s a trap: what neuroscience identifies as the core of learning — prediction and feedback — is missing. When you write code yourself, you’re constantly predicting what comes next, receiving feedback on whether it works, and modifying synapses accordingly. Reading already-completed AI code, by contrast, is post-hoc interpretation with the prediction step removed.

It’s like solving a math problem with the answer key open beside you. You can follow the solution and understand it, but in an actual exam facing a blank page, the pen doesn’t move — because the circuit for recognition and the circuit for retrieval are different. The total accumulated pain of having decided the details yourself determines the density of the chunk.

The Friction Keeping You on the Growth Path Is Gone

Let’s step back and look at the bigger picture. The problem of stopped growth existed before AI. Let’s examine what “repeating one year of experience 10 times” actually looks like in practice.

The developer who only copies and pastes answers from Stack Overflow. The developer who knows their framework’s API but doesn’t know what’s happening underneath. The developer who’s been writing the same CRUD code for five years and only accumulating seniority. What these people have in common is that they didn’t put sufficient load on their brains.

When copied Stack Overflow code runs, they stop there. They don’t ask why it works, whether there was another approach, or what tradeoffs this pattern carries. Working only above the abstractions a framework provides means the chunks for the underlying mechanisms never form. Repeating the same code patterns does lead to the autonomous stage — but when the range of patterns you’ve automated is narrow, you’re helpless in front of a new problem.

The mechanism operating in all these cases is the same: avoid cognitive load, procedural memory doesn’t form, chunks don’t get built, growth stops. The path to stopped growth was already wide open before AI.

But AI has dramatically reduced the friction on that path. In the Stack Overflow era, you still had to search, compare multiple answers, and adapt them to your situation. That process imposed at least minimal cognitive load. Even relying on a framework, you had time to read the docs and work through examples.

AI, given the context, produces complete code immediately. No searching, no comparing, no adapting. The distance to “working code” has become nearly zero.

From a productivity perspective, this is a revolution. But from a learning perspective, it means fewer opportunities for the brain to be loaded. Before AI, even choosing the “easy path” involved some friction. AI has eliminated even that friction. Sliding into the path of stopped growth is now far easier than before.

This problem doesn’t spare you just because you’re senior. The shape of it is different, that’s all. It’s worse for junior developers, who haven’t yet formed sufficient procedural memory — AI letting you skip that formation process means building career years on top of a foundation of weak fundamentals.

It’s like asking someone still learning to drive to evaluate the decisions of a self-driving car. Someone who has never held the steering wheel will struggle to detect a self-driving mistake.

But seniors aren’t off the hook either. Technology keeps changing, and every time a new language, framework, or paradigm appears, you encounter new patterns your existing chunks can’t handle.

To embody these new patterns, you have to start again from the cognitive stage. Being senior doesn’t exempt you from that. Everyone is a beginner when learning something new.

The advantage of a senior is being able to form new chunks faster by connecting them to existing abstractions — “this is similar to the middleware pattern on the backend.” But even that connection is discovered by actually getting hands-on with the code, not automatically discovered by reading AI output.

Regardless of seniority, you need time to personally think through problems and touch code in the AI era. So how do you create that time while still using AI?

How to Load the Brain

The core principle for growth comes down to one thing: you have to load the brain.

Drafting your own design before handing anything to AI is an intentional application of the generation effect. Because you’ve already formed your own answer, you’re not passively consuming AI output — you’re comparing and evaluating. In the process of judging “what’s different from my design,” “is AI’s choice better or is mine,” the brain’s semantic processing and executive control regions activate simultaneously. The same mechanism shown in generation effect research.

Taking code review seriously works the same way. Waving it through with “it works, so pass” puts almost no load on the brain. But the moment you consciously ask “why this structure?” or “if we had to modify this code six months from now, where would the problem be?”, the brain starts actively using working memory. That it’s annoying and time-consuming is exactly the point — that annoyance is what Bjork called desirable difficulty.

Carving out time to actually write code yourself is irreplaceable from the perspective of procedural memory formation. As Anderson’s model shows, moving from the cognitive to the associative stage, and from the associative to the autonomous stage, requires repeated practice. Reading doesn’t trigger these transitions.

That’s why 30 minutes of struggling through code yourself leaves it more deeply ingrained in memory than code AI generated in 3 seconds. When you’re stuck and making no progress, asking AI for a hint is a valid strategy — but the key is getting only the minimum information, not the whole answer. Getting the answer handed to you versus working it out yourself from a hint puts entirely different loads on the brain.

This is precisely the point of desirable difficulty: too easy and no load is applied; too hard and only frustration remains. Use AI to lower the barrier to entry, while making the core judgment calls yourself — that’s how you maintain the right level of difficulty.

What all these practices have in common is that they’re all annoying. From a pure productivity standpoint, they’re inefficient. They feel like taking the long way around something AI could handle quickly.

Yes, it'll be annoying and stressful — but that kind of stress is necessary.

The optimal strategies for production and learning are different. AI is an excellent tool for production, but limited as a tool for learning. And in the long run, what remains in the brain determines the quality of your code reviews, the accuracy of your design judgment, and — paradoxically — how well you can use AI.

When these exercises accumulate, they build an eye. An eye that, the moment it sees AI-generated code, senses what’s wrong and where a better choice could be made. That’s the concrete substance of the “ability to judge and correct output” I described at the start.

Pain Is Not a Choice — It’s a Condition

The concern in this post isn’t “AI is bad.” Developers whose growth stopped existed before AI, and they’ll exist without it.

The core point is a structural constraint built into how the brain works: avoid cognitive load, and growth stops. AI is simply a tool that has made that avoidance unprecedentedly easy.

I think this is a structural problem, not a matter of individual willpower. Without consciously designing a structure in which you can still grow while using AI, drifting toward the comfortable option is human nature.

The brain is fundamentally wired to conserve energy. If the same result can be achieved with less effort, the brain will naturally choose that. This isn’t weakness of will — it’s how the brain is built.

That’s why the real question shouldn’t be “to use AI or not” but “how do I load the brain while using AI?” There’s a vast difference between working comfortably and working only comfortably.

Pain is something we want to avoid — but from the perspective of growth, it’s less of a choice and more of a condition. No matter how far AI advances, this condition doesn’t change. Not as long as the way the brain works doesn’t change.

And in an era where those who use AI well survive, the paradox is that the power to do so comes from the ability to judge without AI.
